Large data breaches and leaks now regularly affect even the most seemingly well-guarded organisations and institutions. Over the past five years, a higher volume of data has been released to the public than in the previous 50 years put together. This wave of leaks and breaches means that media outlets, the public, and political systems need to decide how best to serve the public interest when data is made available online.

This information can come from many sources:

A data breach is a compromise of security that leads to the accidental or unlawful destruction, loss, alteration, unauthorised disclosure of, or access to protected data. This is often (but not only) the result of an attack by an outsider. Data dumps from breaches are becoming increasingly common, and it can be difficult to distinguish a breach caused by an outside attack from a leak by an insider. Examples of data breaches that are assumed to be from outside attacks include DC Leaks and the leak of Democratic National Committee emails.

A data leak is a data breach where the source of the data is someone inside the organisation or institution that has collected that data. This usually takes the form of whistleblowing (the act of telling authorities or the public that someone else is doing something immoral or illegal): for example, a government or private-sector employee who shares data with the public, or with a group of individuals, showing that their employer is engaged in what they perceive to be malpractice. While whistleblowing has led to unprecedented exposure of secret and illegal government surveillance, corporate malfeasance and corruption, there is often little transparency about the decisions that determine when, how, and what data is released to the public.

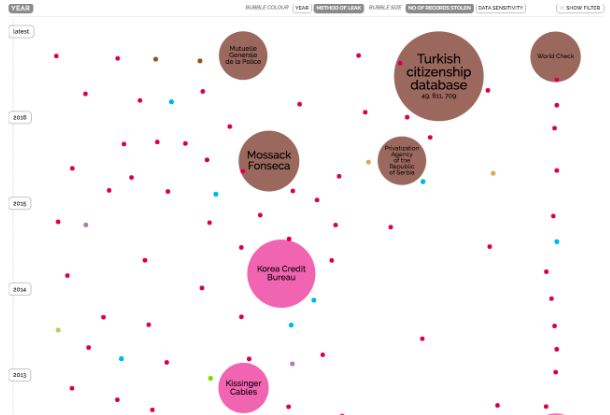

From Information is Beautiful’s visualisation of the world’s biggest data breaches. Brown shows leaks, pink shows ‘inside jobs’.

As a result, there are serious responsible data issues to be grappled with. As with many responsible data grey areas, there are likely few hard and fast rules, but rather questions to be considered and addressed on a contextual basis.

Broadly speaking, responsible data practices for managing and publishing on data leaks from whistleblowers and other sources need to take the following points into consideration (this list is likely not exhaustive!):

- speedy, high quality publication of data leaks relevant to the public interest

- explicit communication about data provenance, governance, and quality, taking care to protect sources when relevant

- appropriate planning for preservation and accessibility of large leaks, where relevant (the most obvious being well-organised repositories for preservation and search)

- operational security and residual data that can expose anonymous sources

- explicit principles for responsibly managing, publishing, reporting, and verifying data dumps

- whistleblower policies and media practices that create an enabling environment for whistleblowing and legal protections for whistleblowing

Caring for the people in the data

Data leaks and whistleblowing inherently require sharing data without the consent of its creators or owners. In fact, data owners are often the target of data leaks. However, owners of the data are unlikely to be the only people reflected in a dataset that hasn’t been treated in advance.

Being incidentally included in a particular dataset can have damaging consequences. For example, this summer, Wikileaks published personally identifiable information of women in Turkey. They appear to have been included in what was thought to have been emails related to President Erdogan. As technosociologist and scholar Zeynep Tufekci wrote at the time, there were serious consequences of this release.

“I hope that people remember this story when they report about a country without checking with anyone who speaks the language; when they support unaccountable, massive, unfiltered leaks without teaming up with responsible parties like journalists and ethical activists; and when they wonder why so many people around the world are wary of ‘internet freedom’ when it can mean indiscriminate victimization and senseless violations of privacy.”

The responsible approach to dealing with this data would have been to verify the data prior to publishing it online, redact it to ensure that no sensitive data was included, and establish that the data was indeed an issue of public interest. Given the size and scale of the data, this is harder than it might sound. In this case, it was made more difficult by language barriers and a lack of adequate context for understanding the contents of the leak before it was made available online.

As with all responsible data issues – context is queen. In the case of data leaks, the number and diversity of actors and incentives in the chain of data handling make understanding and decision-making across different contexts challenging.

Data Leaks and Whistleblowing best practices

With the advent of high-profile whistleblowers, much has been written and done to ensure the safety of those carrying out the act of whistleblowing, in terms of behavioural best practices, legislative protections, and technical best practices made possible by platforms like SecureDrop and GlobaLeaks.

But there are others who need to make important decisions about data leaks: publishers and consumers of the data.

Potential users might include journalists (those working within a newsroom, and freelancers), students, researchers, activists, academics, data scientists, and more. The field of journalism is established enough to have various codes of ethics, dependent upon the newsroom or the particular union, but as a younger field, data science lacks this, as identified by Nathaniel Poor in this case study contemplating the use of hacked data in academia:

“Journalists use data and information in circumstances where authorities and significant portions of the public don’t want the data released, such as with Wikileaks, and Edward Snowden… However, journalists also have robust professional norms and well-established ethics codes that the relatively young field of data science lacks. Although cases like Snowden are contentious, there is widespread acceptance that journalists have some responsibility to the public good which gives them latitude for professional judgment. Without that history, establishing a peer-group consensus and public goodwill about the right action in data science research is a challenge.”

A number of efforts have been made to introduce ethics into data science curricula – which addresses part, but not all, of the problem. Not all of the potential users, or even hosters, of the data will identify as a ‘journalist’ or a ‘data scientist’. If data is made available through whistleblowing, perhaps different contextual considerations will apply, though lessons can definitely be learned from other, related sectors.

Calls have long been made for data journalists to consider their ethical responsibilities prior to publishing. Writing about the use of big data by academic researchers, Boyd and Crawford write: “it is unethical for researchers to justify their actions as ethical simply because the data is accessible.”

This mantra might well apply to anyone who is thinking of using, or hosting, leaked data – so, what questions should they ask before using that data? Are there red lines that we should never cross, on the side of both the source and the data user, such as publishing data on individuals’ bank account numbers, health status, or sexual orientation, to name just a few? Might it be possible to agree upon a few shared lines that don’t get crossed, even in leaks?

Once someone decides to work with data made available through whistleblowing, what responsible data approaches can they take to ensure that no further harm comes to people reflected in that data set? This might involve taking steps to verify the data and making this process transparent, to ensure that others understand where it came from and what the data represents (and doesn’t represent) – or redacting versions of a dataset before publishing it publicly.
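As a rough illustration of what a first redaction pass over a text dataset might look like, here is a minimal Python sketch. The pattern names, the `redact` helper and the sample contact details are all hypothetical and made up for this example. Two regular expressions are nowhere near enough for a real leak: names, addresses, ID numbers and context-dependent details need language-aware tooling and human review.

```python
import re

# Hypothetical, deliberately simple patterns for illustration only.
# Real leaks need far broader checks and review by people who know
# the language and context of the data.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text):
    """Replace each pattern match with a labelled placeholder.

    Returns the redacted text and a per-pattern count of replacements,
    so the redaction step itself can be documented transparently.
    """
    counts = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[REDACTED {label}]", text)
        counts[label] = n
    return text, counts


if __name__ == "__main__":
    sample = "Contact ayse@example.org or +90 212 555 01 23."
    redacted, counts = redact(sample)
    print(redacted)
    print(counts)
```

Returning the counts alongside the text is a design choice: it makes it possible to publish a note on how much was redacted and why, without exposing the redacted values themselves.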

Transparency, privacy and protection

Looking at past examples, the approach of radical transparency rarely seems to be the most responsible approach for working with or dealing with data that has been made available through whistleblowing.

Alex Howard and John Wonderlich of the Sunlight Foundation write:

“Weaponized transparency of private data of people in democratic institutions by unaccountable entities is destructive to our political norms, and to an open, discursive politics…. In every case, for every person described in the data, there’s a public interest balancing test that includes foreseeable harms.”

From a responsible data approach, those “foreseeable harms” are exactly what need to be outlined in advance and transparently considered. This might well end up being a controversial topic – as with many responsible data issues, things are rarely black and white.

The team behind the Panama Papers decided not to publish their original source documents, with ICIJ director Gerard Ryle quoted in WIRED as saying “We’re not WikiLeaks. We’re trying to show that journalism can be done responsibly.” Discussion on the Responsible Data mailing list revealed multiple perspectives on the issue. Some thought that full transparency of the source documents would have been a better decision, while others trusted that the team had good reason for deciding not to publish.

Ultimately, as Howard and Wonderlich also outline: protecting the privacy of individuals reflected in data that has been made available through whistleblowing should be of utmost importance.

Trust and responsibility

Ultimately, what underlies many of these concerns is trust. For the whistleblower releasing information, it is critical to be able to trust that there is a secure process for putting those leaks to use. It requires a great deal of faith to ask someone whose trust has been betrayed by an institution to then place it in journalists, researchers or activists whom they might not personally know.

For a media outlet reporting on data leaks or data breaches of unknown provenance, responsibility is key. Beyond this, individuals need to think carefully about their responsibilities when using and accessing the data, and making it available for others to use in the future.

- What can we do as a community to encourage transparent, explicit communications around those decisions?

- What can we do to make it easier for a whistleblower to trust that transparent and responsible processes will be followed, with a duty of care towards both the public interest and the rights of the people reflected in the data?

- What are ethical ways of reporting on data leaks whose provenance is unknown, or whose provenance is known to be from dubious actors using dubious tactics?

- How can media effectively make decisions and communicate those decisions to its consumers when reporting on data leaks and using them in their reporting?

To join in responsible data conversations on this topic and more, join us on the Responsible Data mailing list.
